A better depth buffer for raymarching

When doing any type of raymarching over a depth buffer, it is very easy to determine if there is no occluder – the depth in the buffer is farther away than the current point on the ray. However, when the depth in the buffer is closer you might be occluded or you might not, depending on a) the thickness of the occluder and b) if there are any other occluders behind the first one and their thickness. It seems most people assume a) is either infinite or a constant value and b) is ignored alltogether.

Since my new renderer is entirely based around screen space raymarching I wanted to improve on this to make it more accurate. This has been done before, but mostly in the context of order independent transparency (I think).

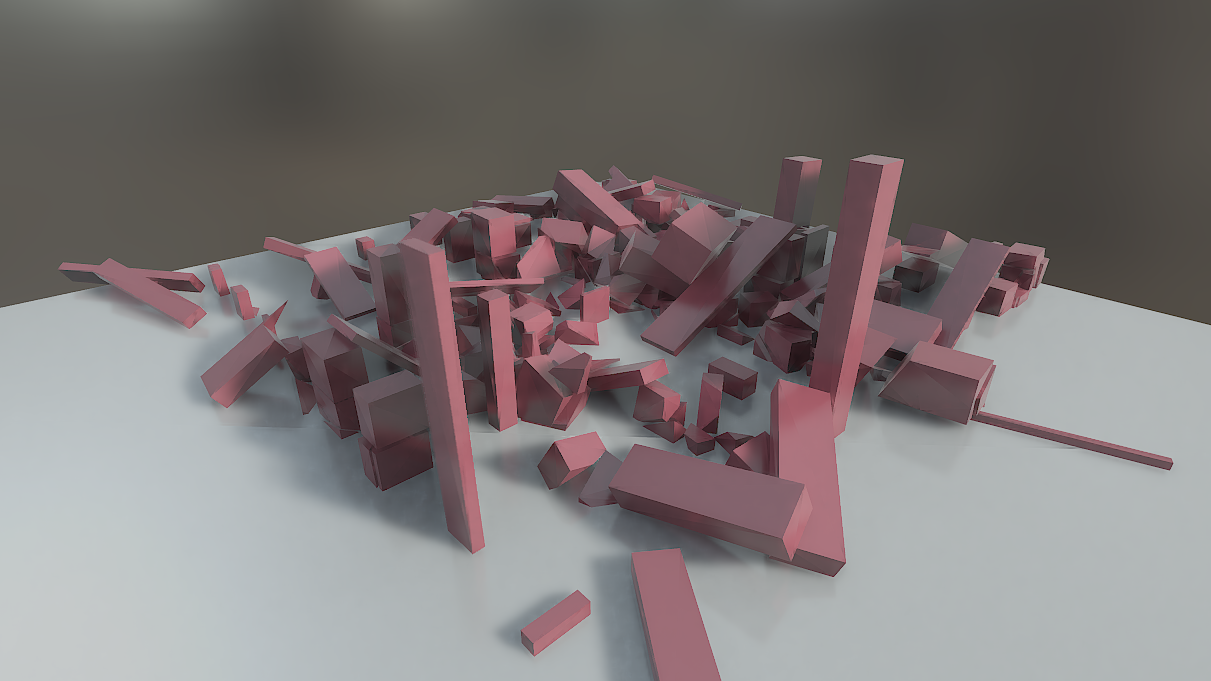

Let’s look at a scene where the occluders are assumed to have infinite depth (I have tweaked the lighting for more distinct shadows to get a better look at raymarching artefacts, so the lighting does not exactly match the environment in these screenshot).

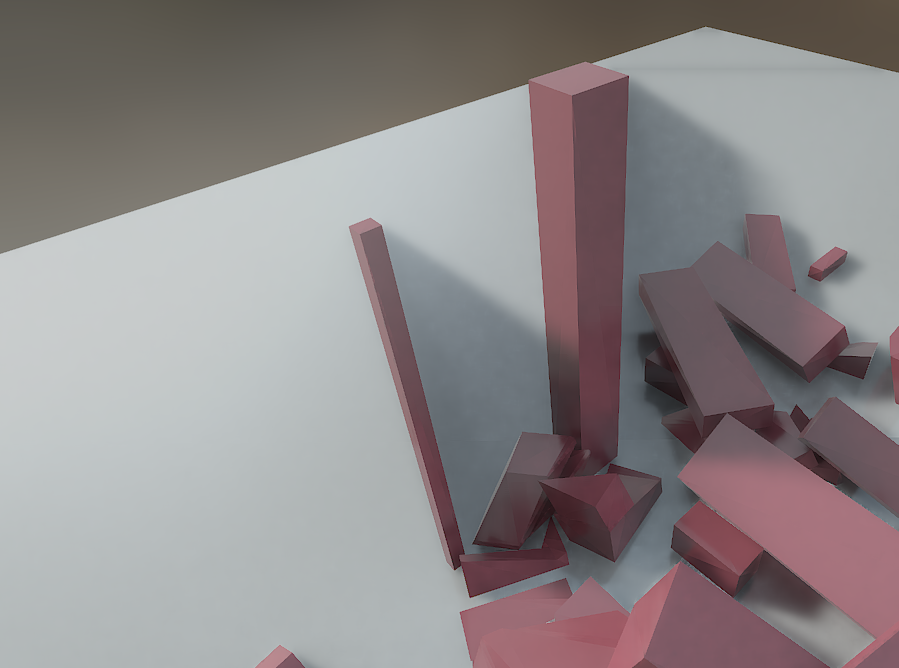

At a first glance it may look okay, but at certain angles, it is very evident that something is off:

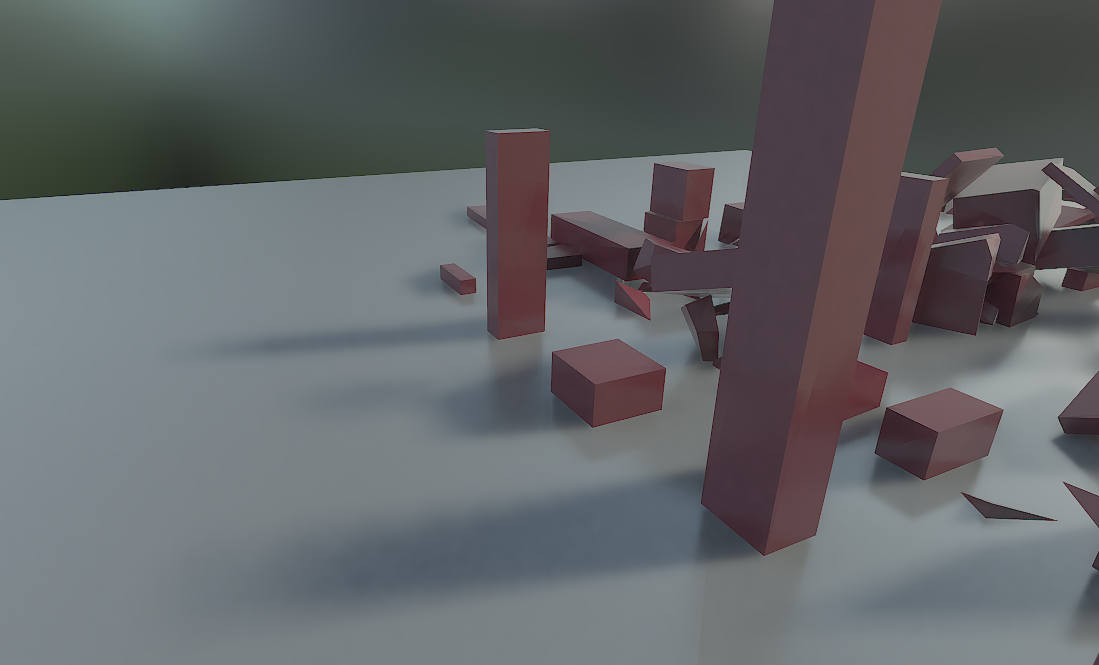

Even an object that is visibly thin will receive a shadow as if infinitely thick. The go-to trick in this situation is to hardcode a thickness and tweak until it looks acceptable:

Still artefacts, but much better. However, for most scenes it’s just not possible to find one single thickness that works for everything. What we ideally want is the actual object thickness per pixel. One relatively cheap way of approximating depth is to render a depth buffer for back faces. As long as objects don’t overlap, are closed and reasonably convex, the difference between front face depth and back face depth is actually a pretty accurate representation of the object thickness.

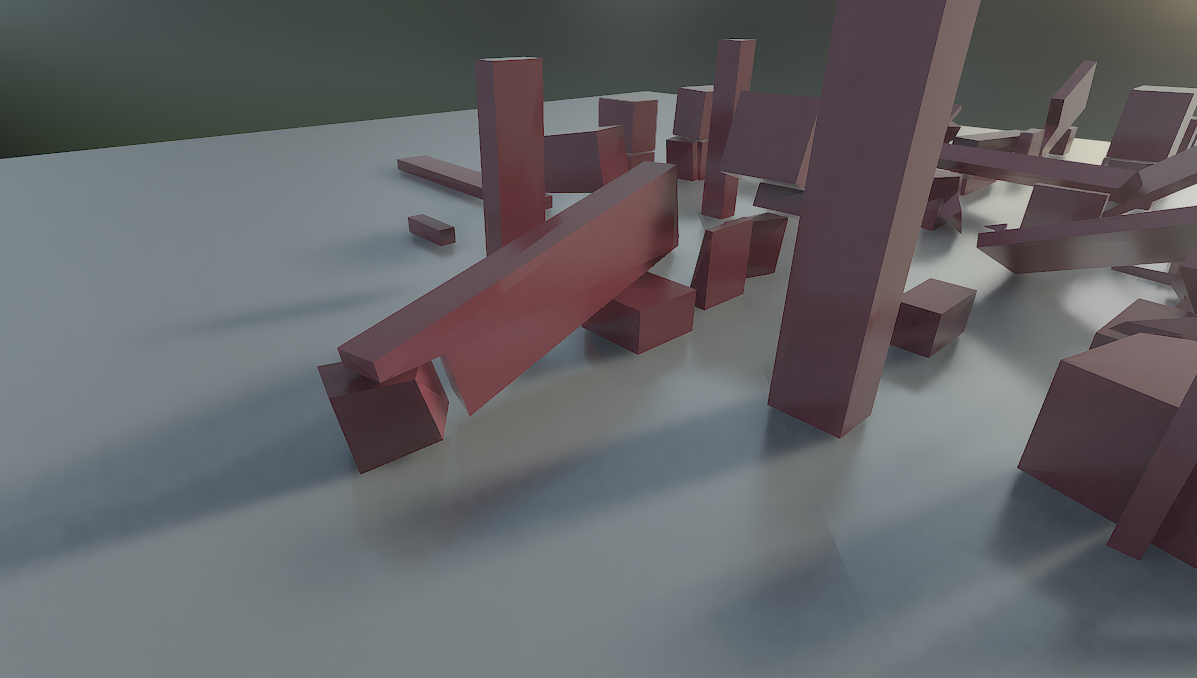

I store front face and back face depth in different channels of the same texture, so I just retrieve RG instead of R for each pixel and compare the depth to both values when raymarching, making it really cheap. This removes a lot of artefacts, but there is still room for improvement.

It is hard to visualize in a still image, but with a moving camera it becomes very clear that shadows are only visible for the first layer of objects. As soon as an object disappears behind something, its shadow is also gone. This is of course particularly evident with long shadows from, say, a sunset.

Creating another layer of depth information is called depth peeling and there are several ways to do it. I use the stencil buffer, but it can also be done by discarding fragments in a shader. I already mentioned that I store front and back face depth in two different channels of the same texture, so why not add another layer of front and back face depth and make it a full, four channel texture? All four depth values (first front, first back, second front, second back) can still be fetched as a single texture read, making it really fast.

One could imagine doing even more depth layers, but the visual improvement would be hard to notice.