Smashing tech

Our latest game Smash Hit has gone far beyond all expectations, being the #1 free game in over 100 countries during launch week and approaching 35 million downloads! I will write several blog posts about the technology here, starting with a tech summary of what is being used and then going deeper into each subject in future posts.

Physics

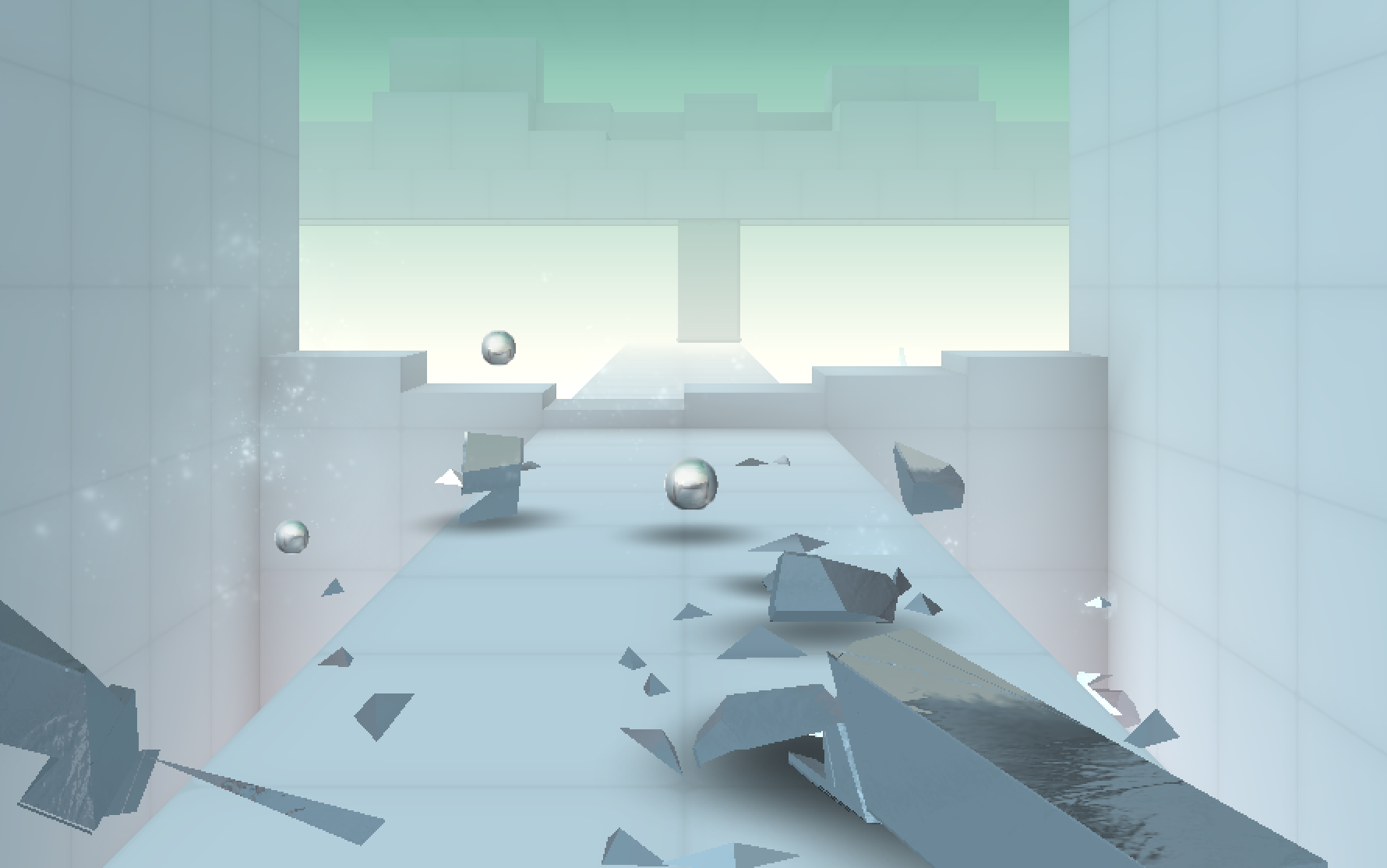

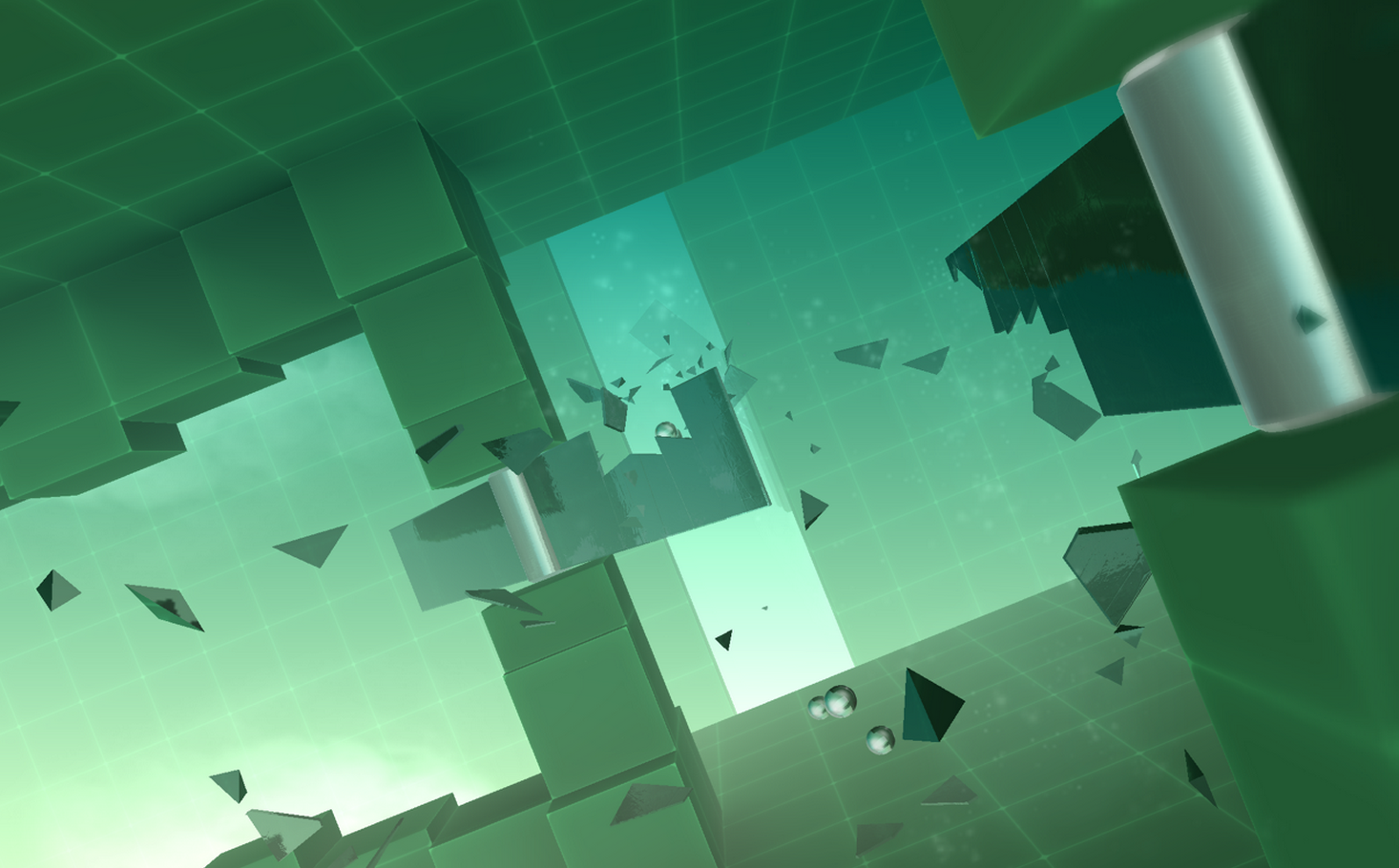

This is by far our most physics-intensive game to date. It’s almost like a physics playground with a game glued on top. The physics engine is tailor-made for this game specifically, but builds on top of the low-level physics library I was working on a few years ago. The game is actually a great showcase for the low-level physics library since its requirements are very non-generic. It’s a streaming, highly dynamic world where more or less everything is moving all the time and objects get inserted and removed constantly. Therefore there is no deactivation (sleeping) or island generation. There are also two types of objects that are simulated differently: full rigid bodies, and debris, which is more lightweight.

Destruction

Destruction is the core game mechanic and had to be fully procedural, very robust and with predictable performance. The engine supports compounds of convex shapes, like most physics engines. These shapes are then split with planes and glued back together when shattered. Though most objects in the game are flat, the breakage actually supports full 3D objects with no limitations. The breaking mechanic is built into the core solver, so that objects can break in multiple steps during the same iteration. This is essential for good breakage of this magnitude.
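To illustrate what splitting a convex shape with a plane involves, here is a minimal sketch. It is not the game's code: it works in 2D for brevity (the engine described above handles full 3D), and `Vec2`, `Plane2` and `splitConvex` are hypothetical names chosen for this example.

```cpp
#include <cstddef>
#include <utility>
#include <vector>

struct Vec2 { float x, y; };

// Hypothetical 2D "plane": all points p with dot(n, p) == d.
struct Plane2 { Vec2 n; float d; };

static float signedDistance(const Plane2& pl, Vec2 p) {
    return pl.n.x * p.x + pl.n.y * p.y - pl.d;
}

// Splits a convex polygon into the part in front of the plane (first)
// and the part behind it (second). Either part may come back empty
// or degenerate if the plane misses or only touches the polygon.
std::pair<std::vector<Vec2>, std::vector<Vec2>>
splitConvex(const std::vector<Vec2>& poly, const Plane2& pl) {
    std::vector<Vec2> front, back;
    for (std::size_t i = 0; i < poly.size(); ++i) {
        Vec2 a = poly[i];
        Vec2 b = poly[(i + 1) % poly.size()];
        float da = signedDistance(pl, a);
        float db = signedDistance(pl, b);
        if (da >= 0.0f) front.push_back(a);
        if (da <= 0.0f) back.push_back(a);
        // Edge crosses the plane: the intersection point belongs to both parts.
        if ((da > 0.0f && db < 0.0f) || (da < 0.0f && db > 0.0f)) {
            float t = da / (da - db);
            Vec2 hit{a.x + t * (b.x - a.x), a.y + t * (b.y - a.y)};
            front.push_back(hit);
            back.push_back(hit);
        }
    }
    return {front, back};
}
```

Because both halves of a convex shape cut by a plane are themselves convex, the pieces can be split again or regrouped into new compounds without any special cases.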

Graphics

Due to the highly dynamic environment where there can be hundreds of moving objects at the same time, one draw call per object was not an option. Instead, all objects are gathered into dynamic vertex buffers, so there is basically only one draw call per material. Vertex transformation is done on the CPU to offload the GPU and allow culling before vertices and triangles are even sent to the GPU. CPU transformation also opens up a few other tricks not available with conventional rendering. The camera is facing the same direction all the time, which allows the use of billboards to approximate geometry. You can see this in a few instances for round shapes in particular throughout the game.
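As a rough sketch of the batching idea, the code below transforms each object's vertices on the CPU and appends them to one buffer per material. All names (`Transform`, `Object`, `buildBatches`) are hypothetical, and the culling test is deliberately crude; this only illustrates the general technique under those assumptions, not the game's renderer.

```cpp
#include <cstddef>
#include <map>
#include <vector>

struct Vec3 { float x, y, z; };

// Hypothetical rigid transform: row-major 3x3 rotation plus translation.
struct Transform {
    float r[9];
    Vec3 t;
    Vec3 apply(Vec3 v) const {
        return { r[0] * v.x + r[1] * v.y + r[2] * v.z + t.x,
                 r[3] * v.x + r[4] * v.y + r[5] * v.z + t.y,
                 r[6] * v.x + r[7] * v.y + r[8] * v.z + t.z };
    }
};

struct Object {
    int material;                // hypothetical material id
    Transform world;
    std::vector<Vec3> vertices;  // local-space triangle list
};

// One CPU-side vertex buffer per material; each buffer would be uploaded
// and drawn with a single call.
using Batches = std::map<int, std::vector<Vec3>>;

Batches buildBatches(const std::vector<Object>& objects, float nearZ, float farZ) {
    Batches batches;
    for (const Object& obj : objects) {
        // Crude culling on the object's origin only, for illustration. A real
        // renderer would test a bounding volume against the full frustum.
        if (obj.world.t.z < nearZ || obj.world.t.z > farZ) continue;
        std::vector<Vec3>& out = batches[obj.material];
        for (Vec3 v : obj.vertices) out.push_back(obj.world.apply(v));
    }
    return batches;
}
```

Since culled objects never reach the buffers, their vertices cost nothing on the GPU, and the number of draw calls depends on the number of materials rather than the number of objects.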

Shadows

The static soft shadows are precomputed vertex lighting based on ambient occlusion. Lighting is quadratically interpolated in the fragment shader for a natural falloff. The dynamic soft shadows are Gaussian blobs rendered with one quad per rigid body. The size and orientation of the shadow need to be determined at run time since an object can break arbitrarily. I’m using the inertia tensor of the rigid body to figure this out, and the shadow is then projected down on a plane using a downward raycast. This is of course an enormous simplification, but it looks great in 99% of all cases!
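One way an inertia tensor can yield a size estimate is to ask which solid box would have the same mass and principal moments of inertia. The sketch below does exactly that. It assumes the tensor has already been diagonalized, so the three moments are given about the body's principal axes; how the result is mapped onto the shadow quad is not covered, and `equivalentBox` is a hypothetical name.

```cpp
#include <algorithm>
#include <cmath>

struct Extents { float x, y, z; };

// Dimensions of the solid box that has the given mass and principal moments
// of inertia. For a box, Ix = m/12 * (y^2 + z^2), and likewise for Iy and Iz,
// which gives x^2 = 6 * (Iy + Iz - Ix) / m.
Extents equivalentBox(float mass, float ix, float iy, float iz) {
    auto side = [mass](float a, float b, float c) {
        // Clamp at zero to guard against slightly inconsistent input.
        return std::sqrt(std::max(0.0f, 6.0f * (a + b - c) / mass));
    };
    return { side(iy, iz, ix), side(ix, iz, iy), side(ix, iy, iz) };
}
```

For example, a 2 x 4 x 6 box with mass 12 has principal moments 52, 40 and 20, and the function recovers the original dimensions from those values. The principal axes themselves would supply the orientation.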

Music and sound

I wrote my own software mixing layer for this game, which enables custom sound effects processing for environmental acoustics. I use reverb, echoes and low-pass filters with different settings for each environment in the game. The music is made out of about 30 different patterns, each with an intro and an outro, which are mixed together sample-accurately during the transitions. The camera motion is synchronized to the music progression, so the music always switches to the next pattern exactly when entering a new room. This was pretty hard to get right, since it had to be done independently of the main time stepping in order to support slower devices. Hence, camera motion and physics simulation had to be completely decoupled in order to have both predictable simulation and music synchronization on all devices.
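A simple way to picture this decoupling is to derive the camera position purely from the audio clock, so that it never depends on how many physics steps a device manages to run. The sketch below assumes, purely for illustration, that every pattern has the same length in samples and every room the same length in world units; `CameraState` and `cameraFromAudioClock` are hypothetical names.

```cpp
#include <cstdint>

struct CameraState {
    std::uint64_t room;  // index of the room (and music pattern) currently playing
    double z;            // camera position along the travel direction
};

// Maps the number of audio samples played so far to a camera position.
// Precondition: samplesPerPattern > 0.
CameraState cameraFromAudioClock(std::uint64_t samplesPlayed,
                                 std::uint64_t samplesPerPattern,
                                 double roomLength) {
    std::uint64_t room = samplesPlayed / samplesPerPattern;
    std::uint64_t within = samplesPlayed % samplesPerPattern;
    double fraction = static_cast<double>(within) /
                      static_cast<double>(samplesPerPattern);
    return { room, (static_cast<double>(room) + fraction) * roomLength };
}
```

With this mapping the camera crosses into the next room on exactly the sample where the next pattern starts, regardless of frame rate.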

Scripting

Scripting has been a very useful tool during the development of this game. Each obstacle in the game is built and animated using a separate Lua script. Since each obstacle is procedurally generated, it allows us to make many different variations of the same obstacle, for instance by configuring width, height and color, or the number of blades in a fan. Each obstacle runs within its very own Lua context, so it is a completely safe sandbox environment. I’ve configured Lua to minimize memory consumption, and implemented an efficient custom memory allocator, so each context only requires a single memory block of about 40 kB, and there are a few dozen of them active at the same time at most. Garbage collection is amortized to only run for one context each frame, so performance impact is minimal.
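The amortized garbage collection can be pictured as a round-robin scheduler that services one context per frame. The sketch below is an assumption about how such a scheduler might look, not the game's code. `ScriptContext` and `GcScheduler` are hypothetical, and the `collect` callback stands in for a call into the Lua C API such as `lua_gc`.

```cpp
#include <cstddef>
#include <functional>
#include <vector>

// Stand-in for one script sandbox. In a real engine this would wrap a
// lua_State, and collect() would call something like
// lua_gc(L, LUA_GCCOLLECT, 0).
struct ScriptContext {
    std::function<void()> collect;
};

class GcScheduler {
public:
    // Runs garbage collection for exactly one context, then moves on to
    // the next one so that every context gets its turn.
    void tick(std::vector<ScriptContext>& contexts) {
        if (contexts.empty()) return;
        next_ %= contexts.size();  // contexts may have been removed since the last frame
        contexts[next_].collect();
        next_ = (next_ + 1) % contexts.size();
    }

private:
    std::size_t next_ = 0;
};
```

Calling `tick` once per frame means that with a few dozen contexts each one is collected every few dozen frames, and no single frame pays for more than one collection.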

Performance

The game is designed for multicore devices and uses a fork-and-merge approach for both physics and graphics. I was considering putting the rendering on a separate background thread, but this would incur an extra frame of latency, which is really bad for an action game. The audio mixing and sound decoding are done on separate threads.
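For readers unfamiliar with the pattern, here is a minimal fork-and-merge sketch using standard C++ threads. It is only an illustration: `forkAndMerge` is a hypothetical helper, and spawning threads on every call is an assumption made for brevity, where a real engine would more likely keep a pool of worker threads alive.

```cpp
#include <algorithm>
#include <cstddef>
#include <thread>
#include <vector>

// Splits [0, count) into one contiguous chunk per worker, runs the chunks in
// parallel, and returns only once every chunk has finished (the "merge").
template <typename Fn>
void forkAndMerge(std::size_t count, unsigned workers, Fn fn) {
    if (workers == 0) workers = 1;
    std::size_t chunk = (count + workers - 1) / workers;
    std::vector<std::thread> threads;
    for (unsigned w = 1; w < workers; ++w) {
        std::size_t begin = w * chunk;
        std::size_t end = std::min(count, begin + chunk);
        if (begin >= end) break;
        // fn is captured by reference; it outlives the threads because
        // they are all joined before this function returns.
        threads.emplace_back([begin, end, &fn] {
            for (std::size_t i = begin; i < end; ++i) fn(i);
        });
    }
    // The calling thread handles the first chunk instead of idling.
    for (std::size_t i = 0; i < std::min(count, chunk); ++i) fn(i);
    for (std::thread& t : threads) t.join();
}
```

The callback must be safe to run concurrently for different indices, for example by writing only to element `i` of an output array. Because everything is joined within the same frame, this approach adds no extra frame of latency.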

If there is any area you find particularly interesting, let me know!